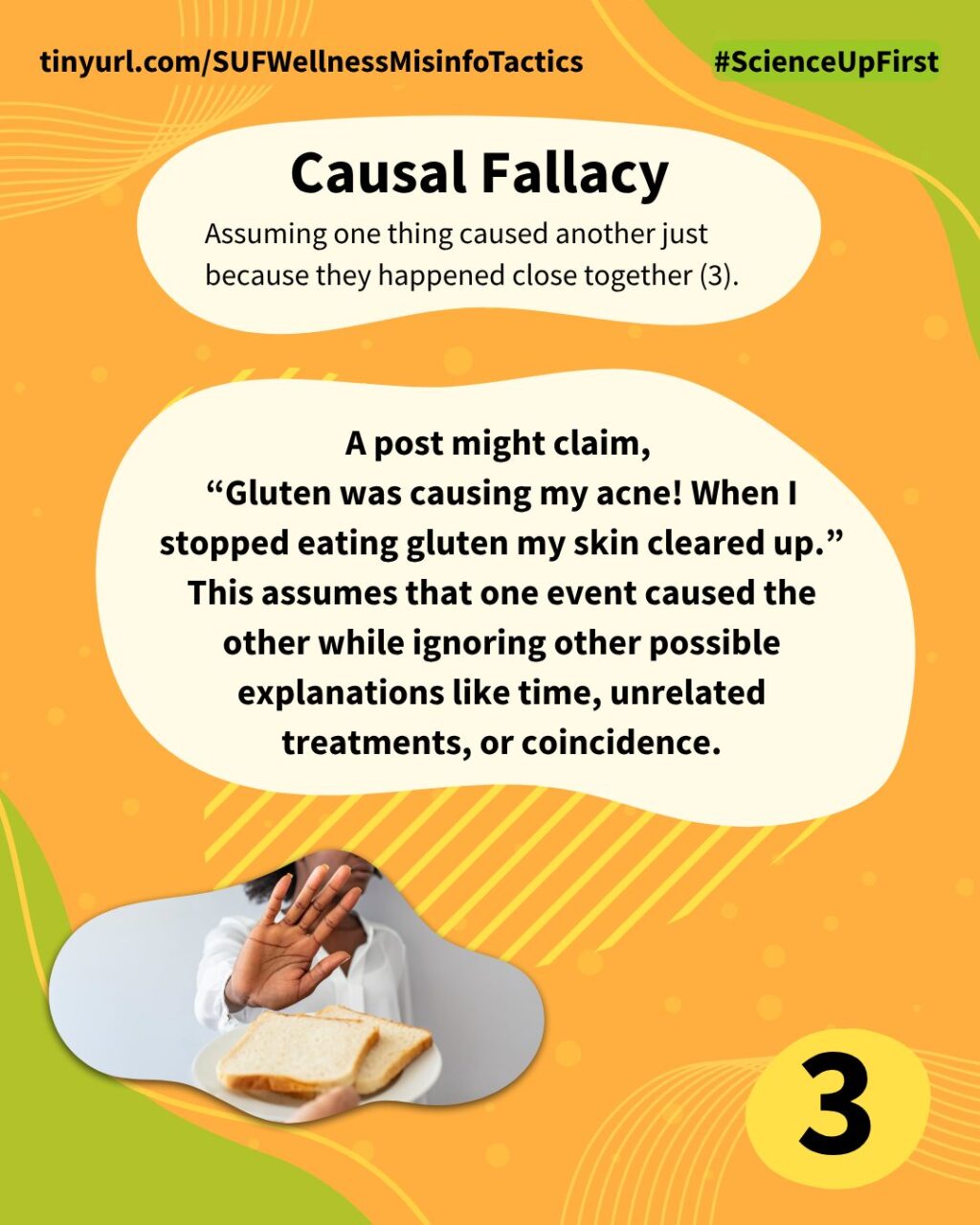

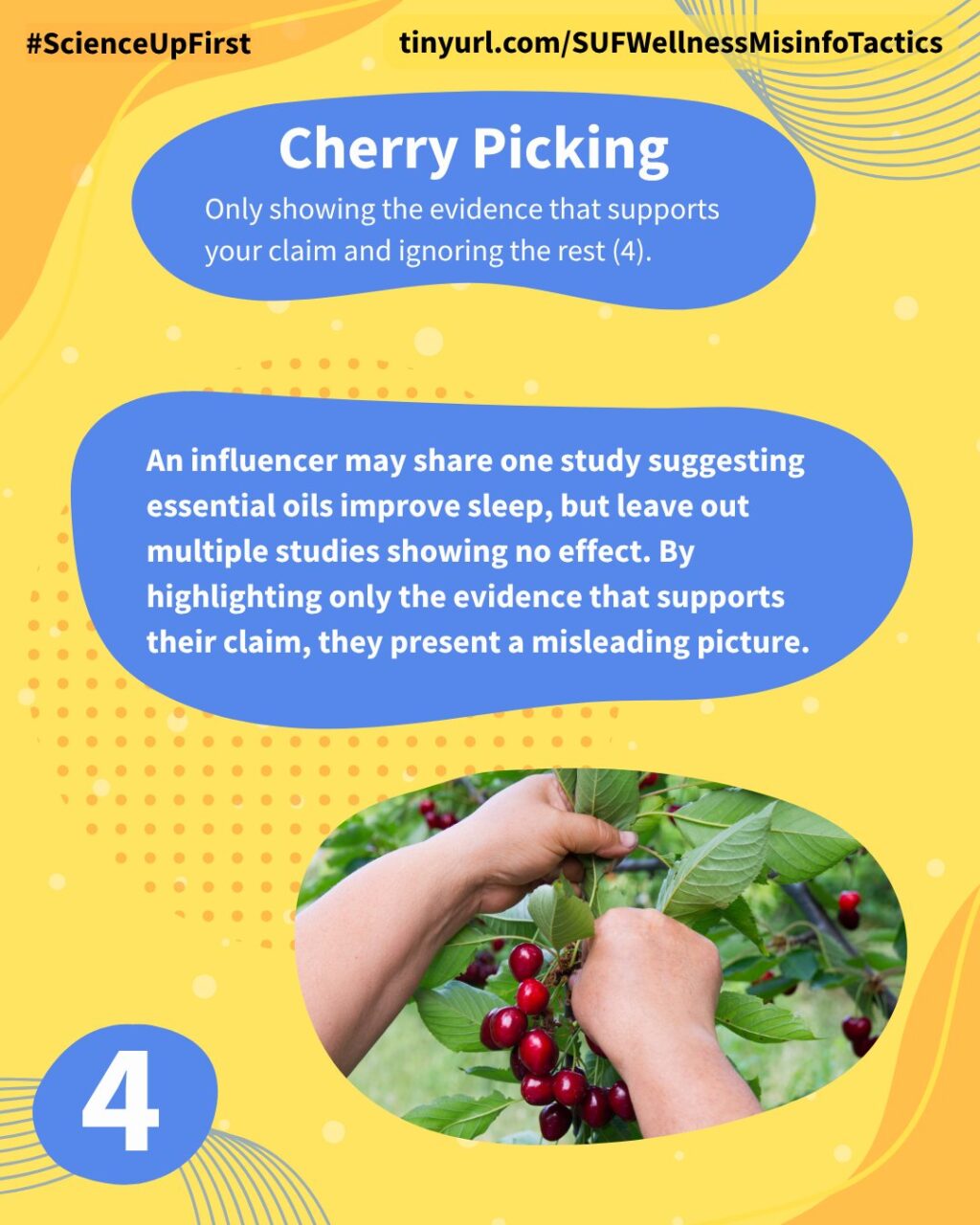

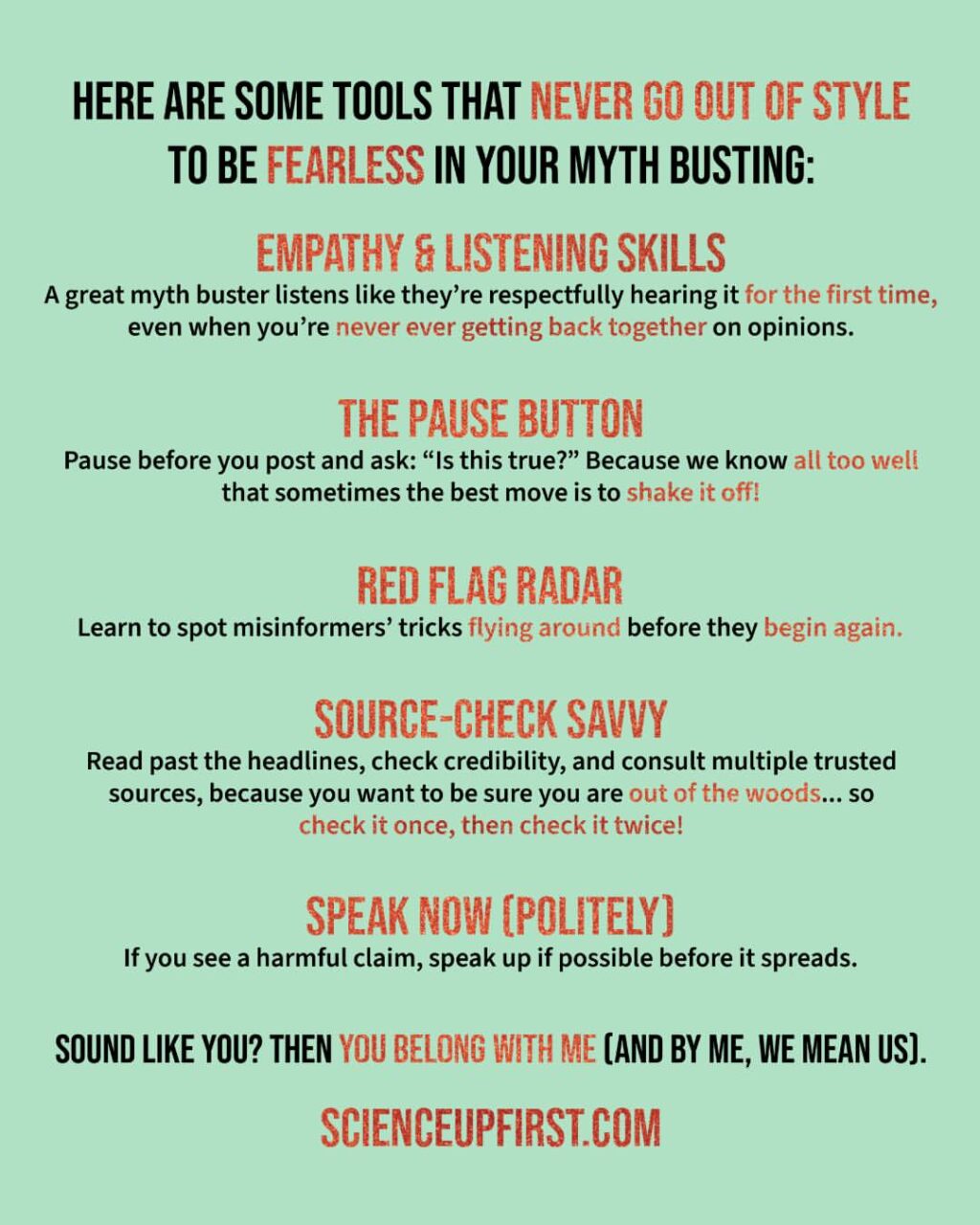

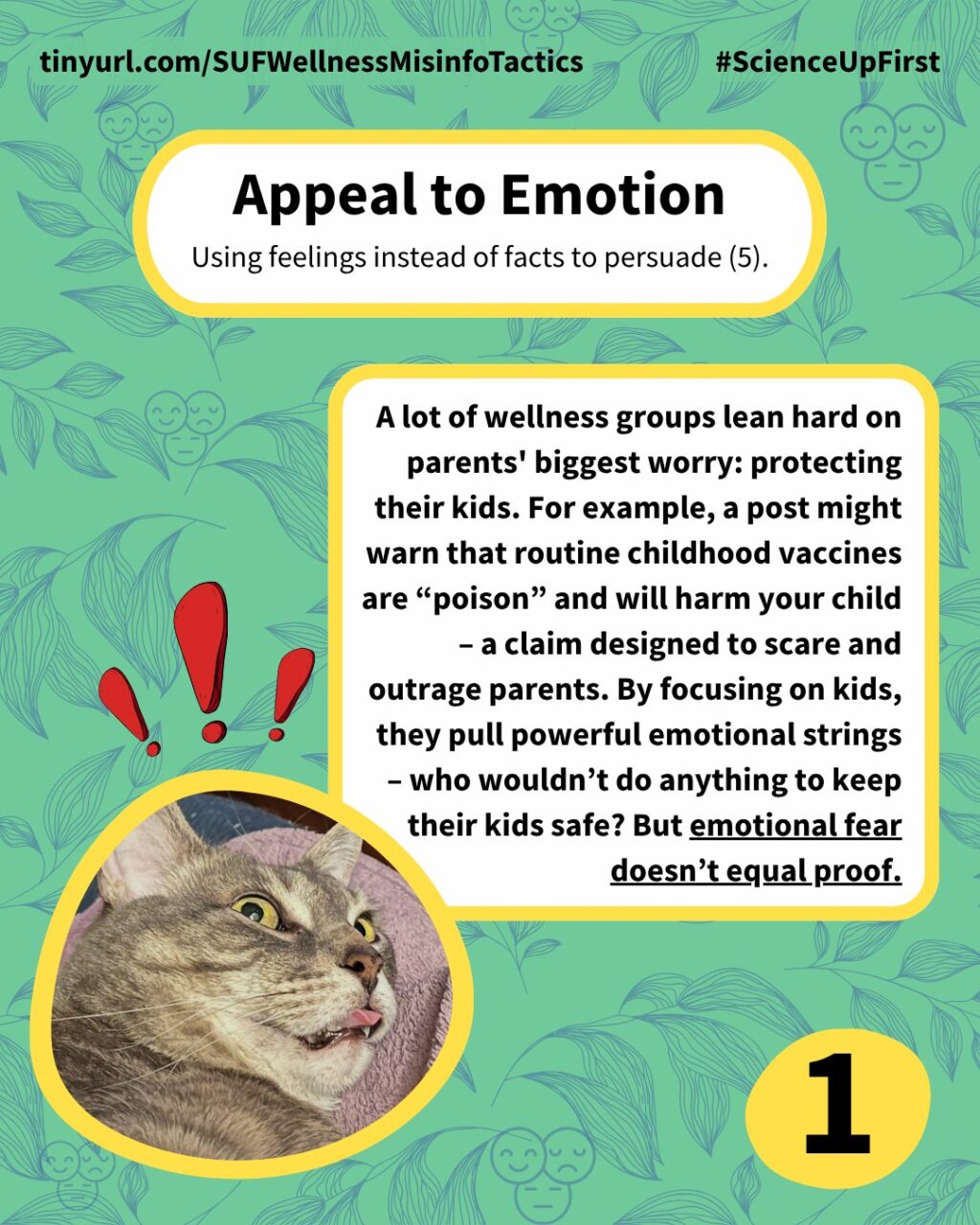

We already covered 4 misinformer tactics that wellness influencers use: appeal to nature, false dichotomy, causal fallacy, and cherry-picking.

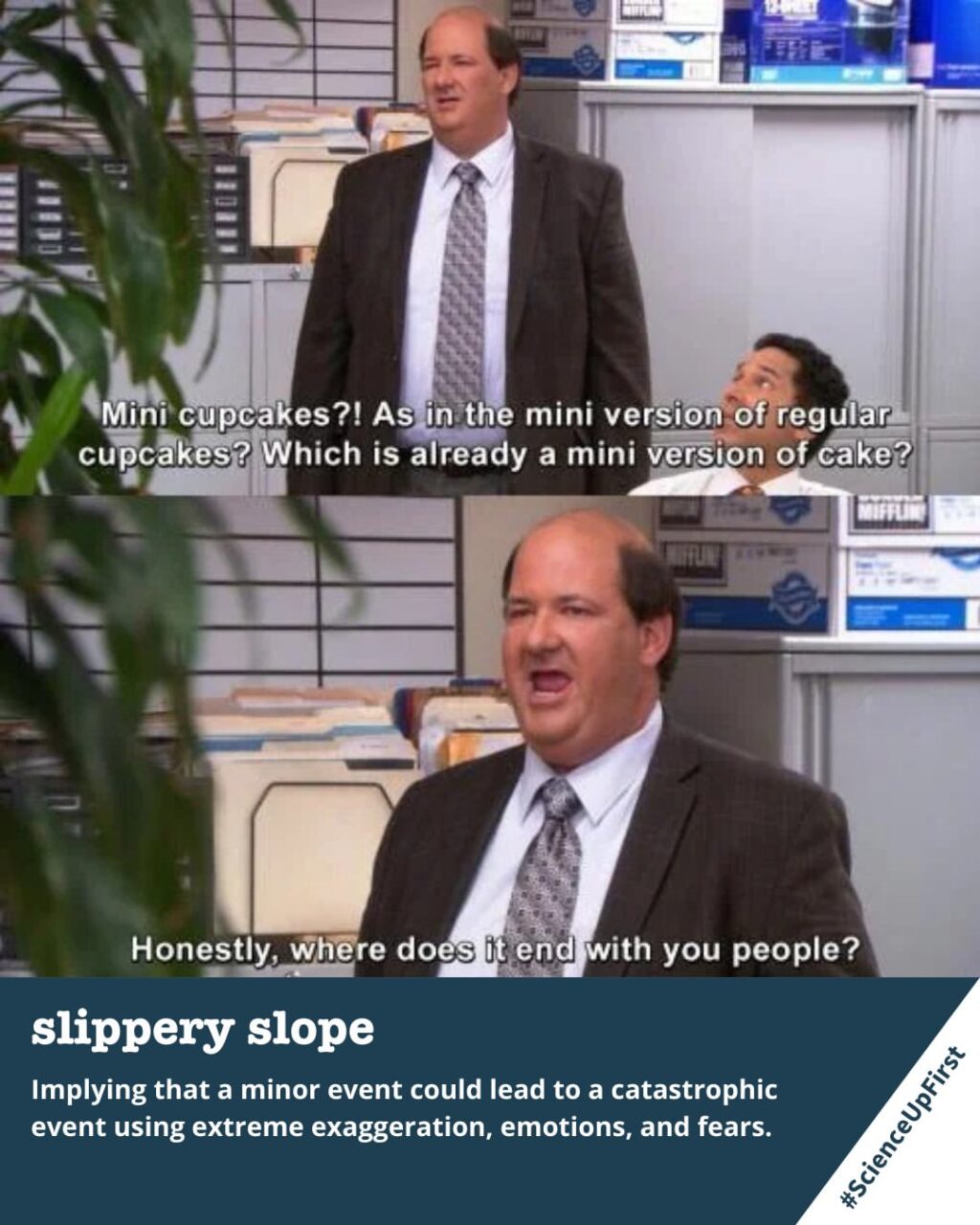

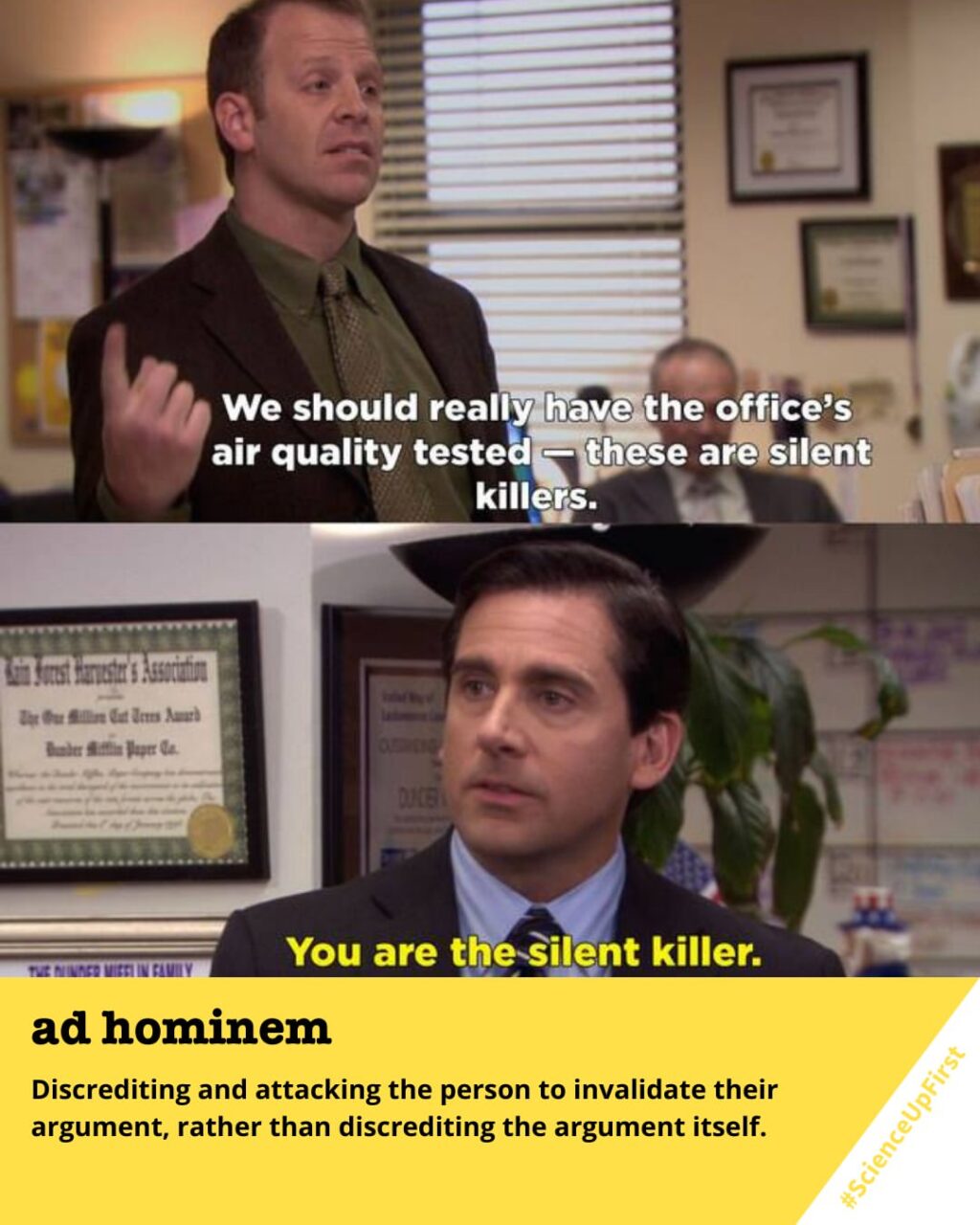

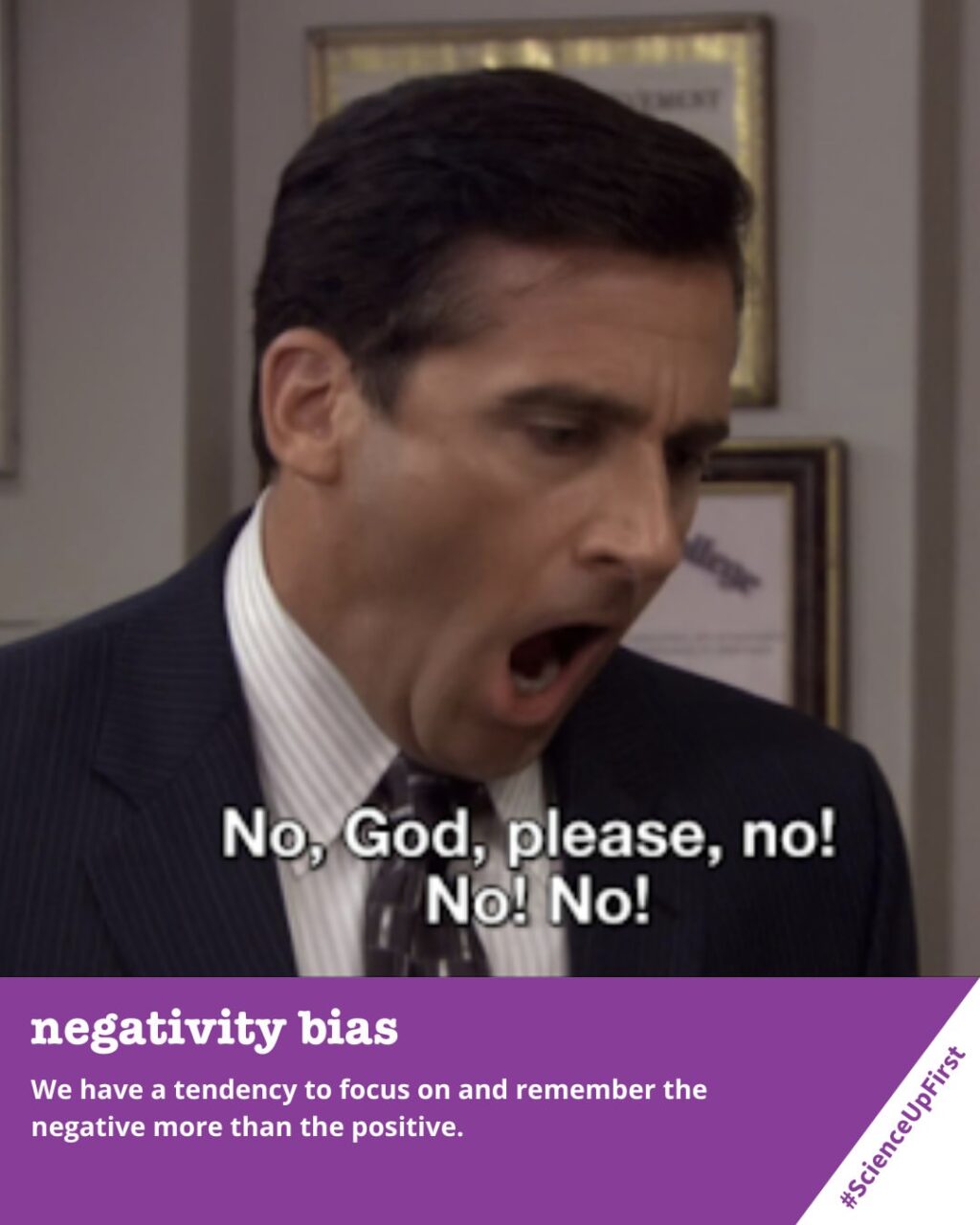

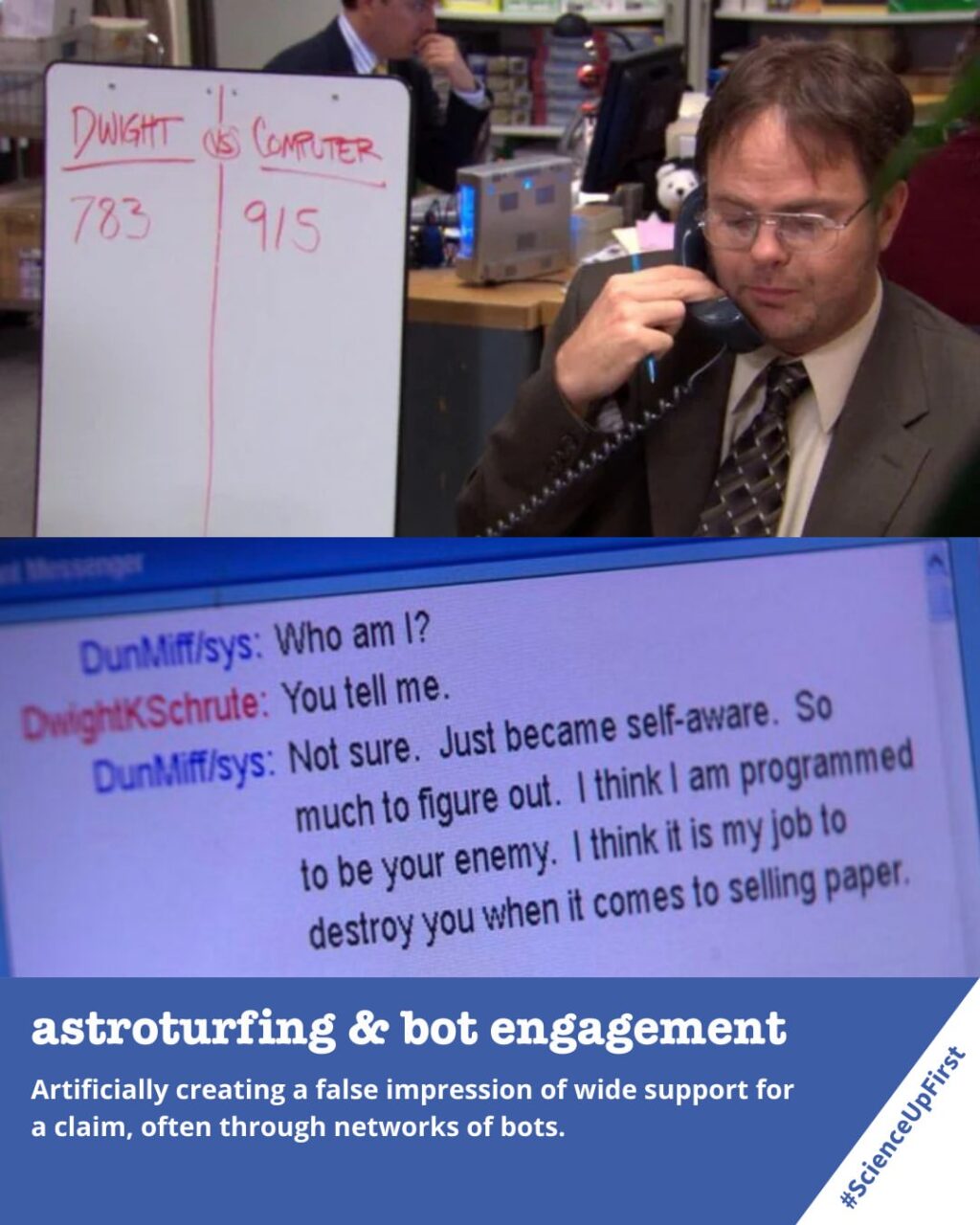

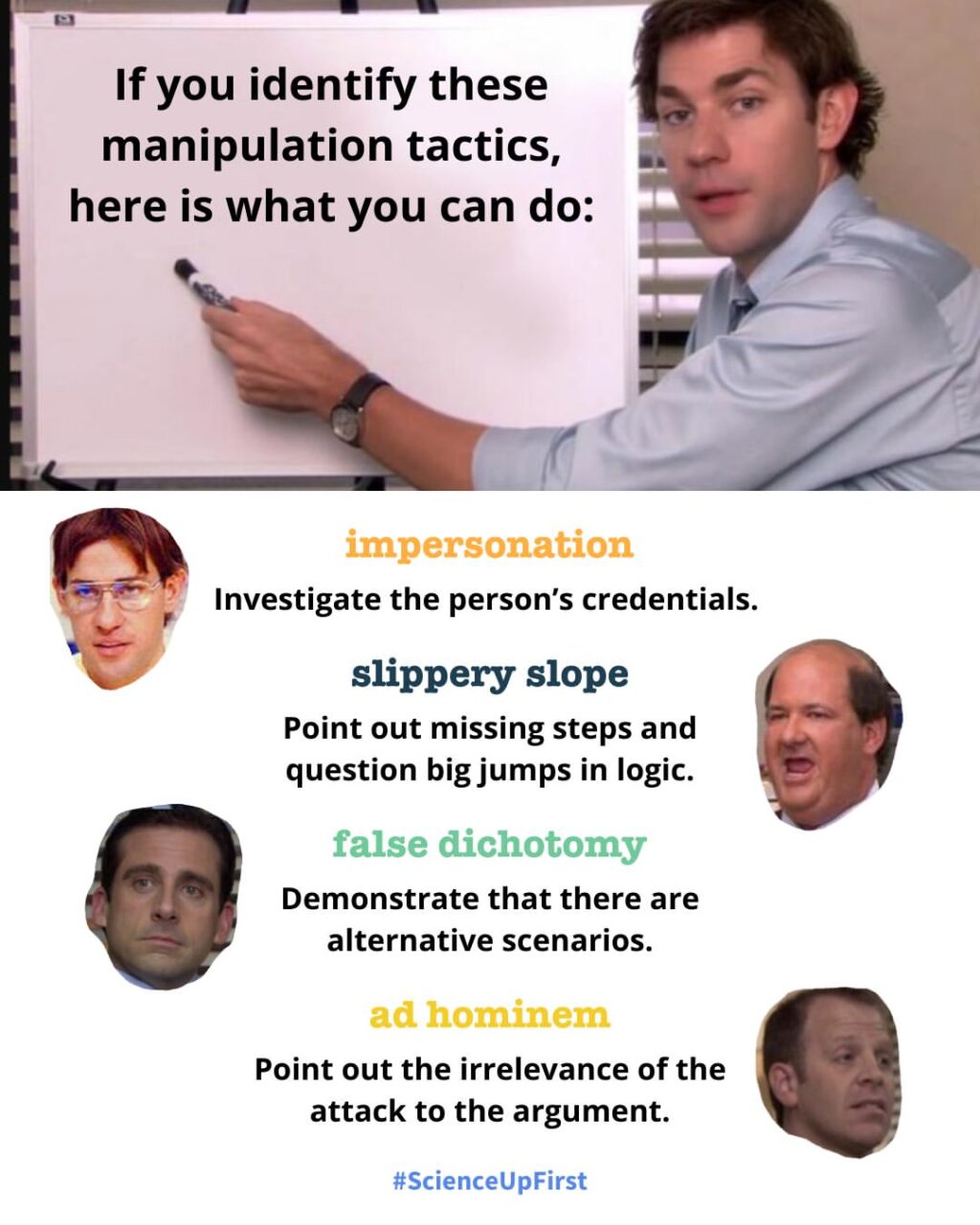

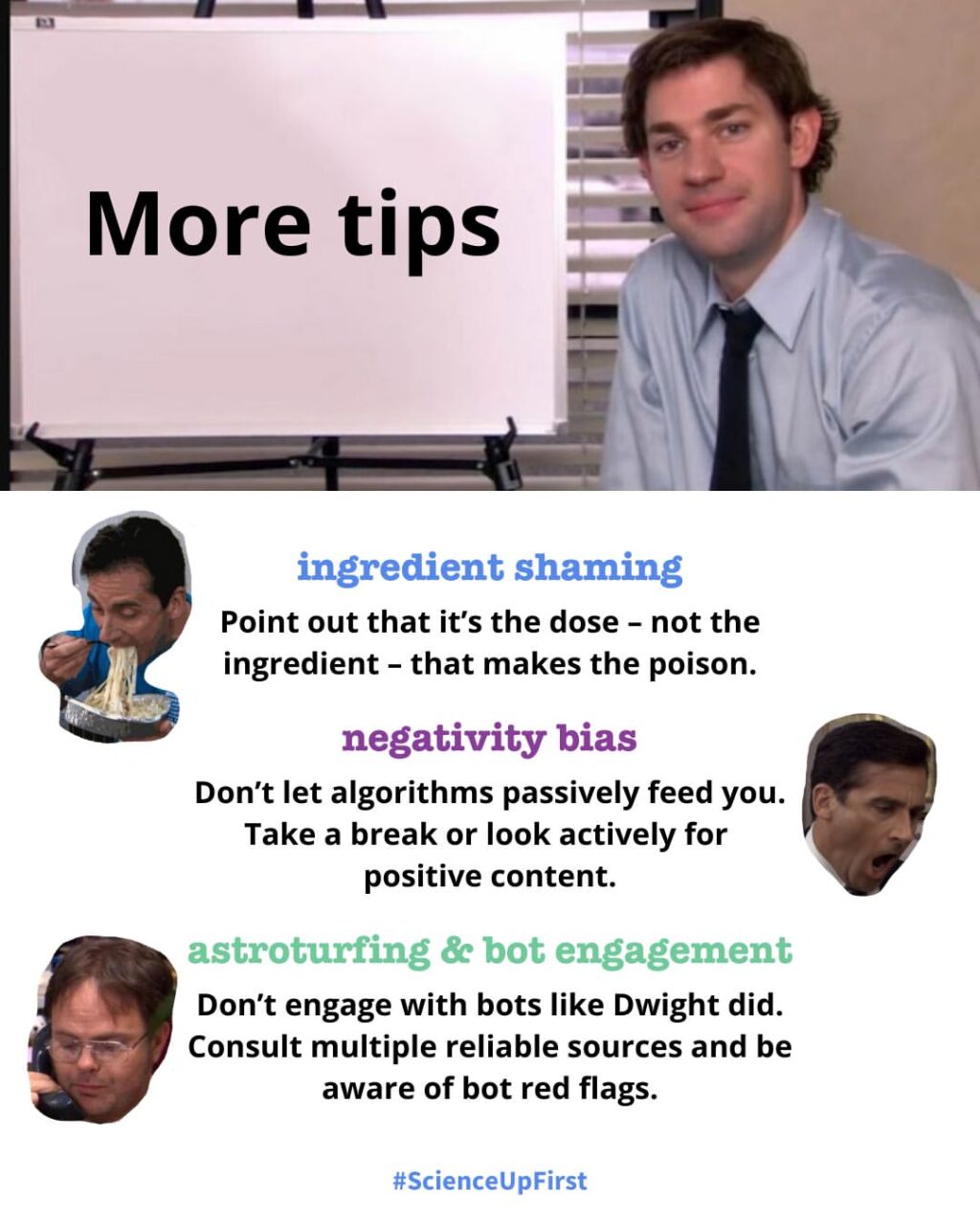

Here are 4 more to watch out for.

Have you come across these tactics in your feed? Let us know!

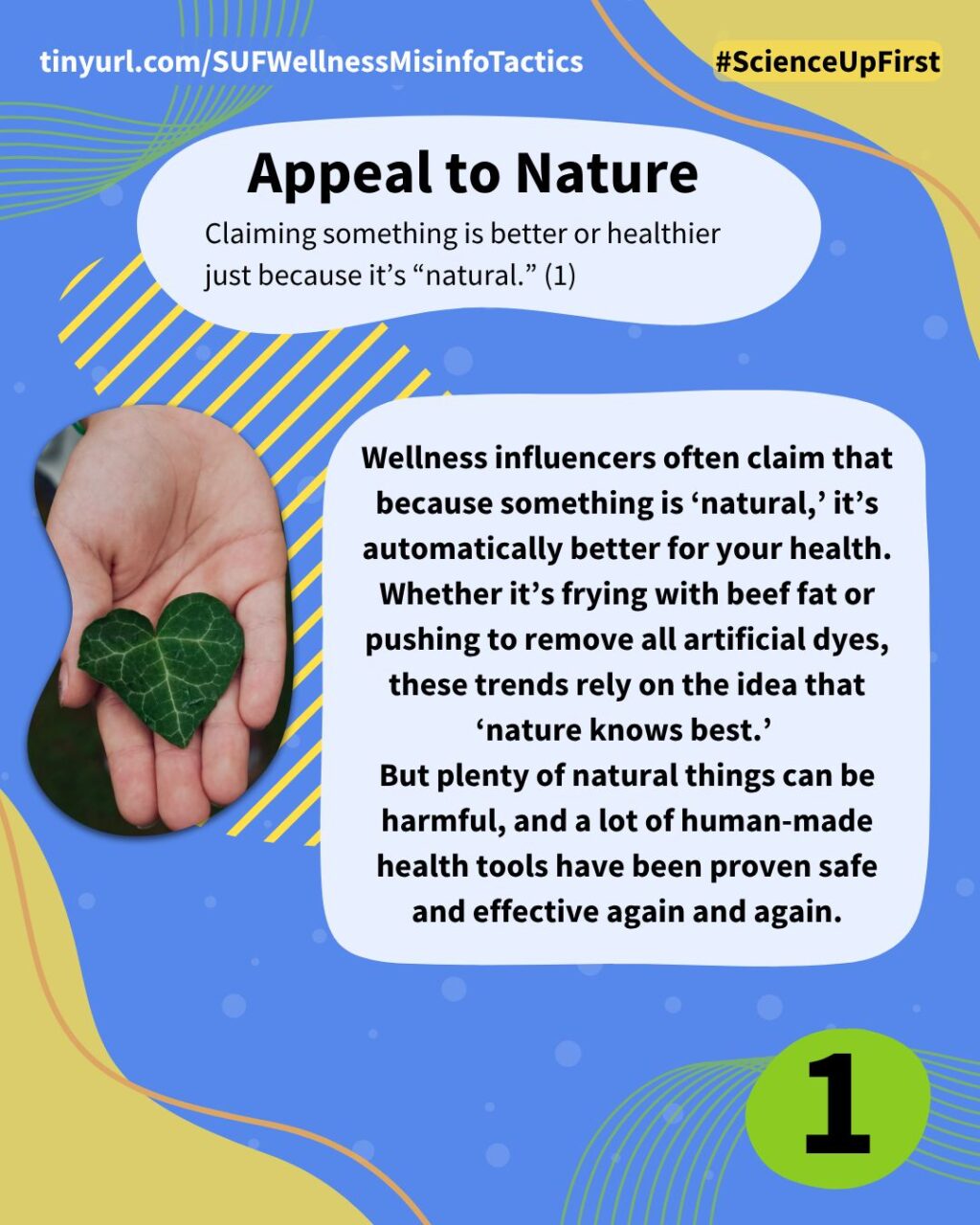

- Misinformer Tactic: Appeal to Nature | ScienceUpFirst | January 2022

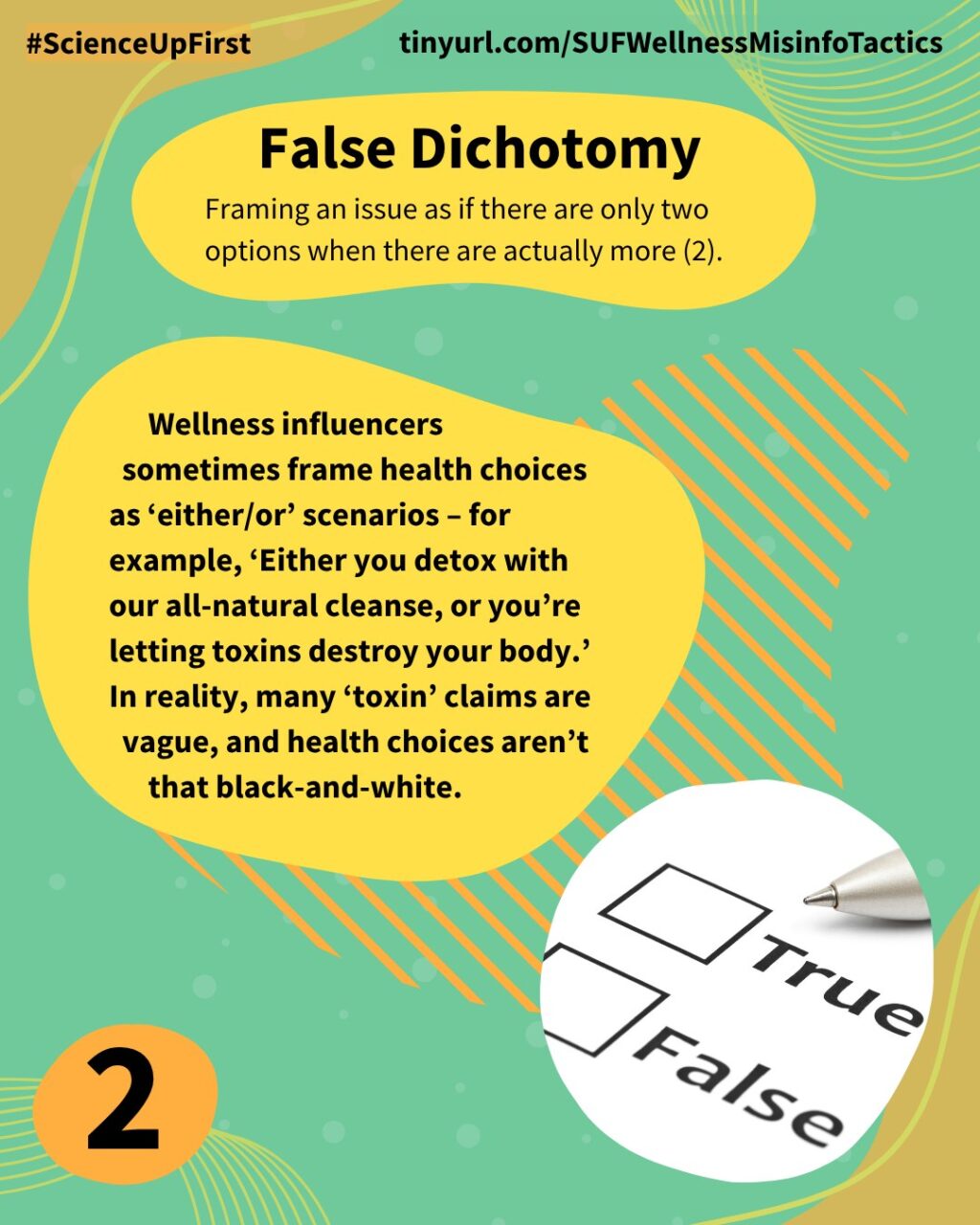

- Misinformer Tactic: False Dichotomy | ScienceUpFirst | December 2021

- Misinformer Tactic: Causal Fallacy | ScienceUpFirst | February 2024

- Misinformer Tactic: Cherry Picking | ScienceUpFirst | May 2024

- Misinformer Tactic: Stirring Up Emotions | ScienceUpFirst | July 2024

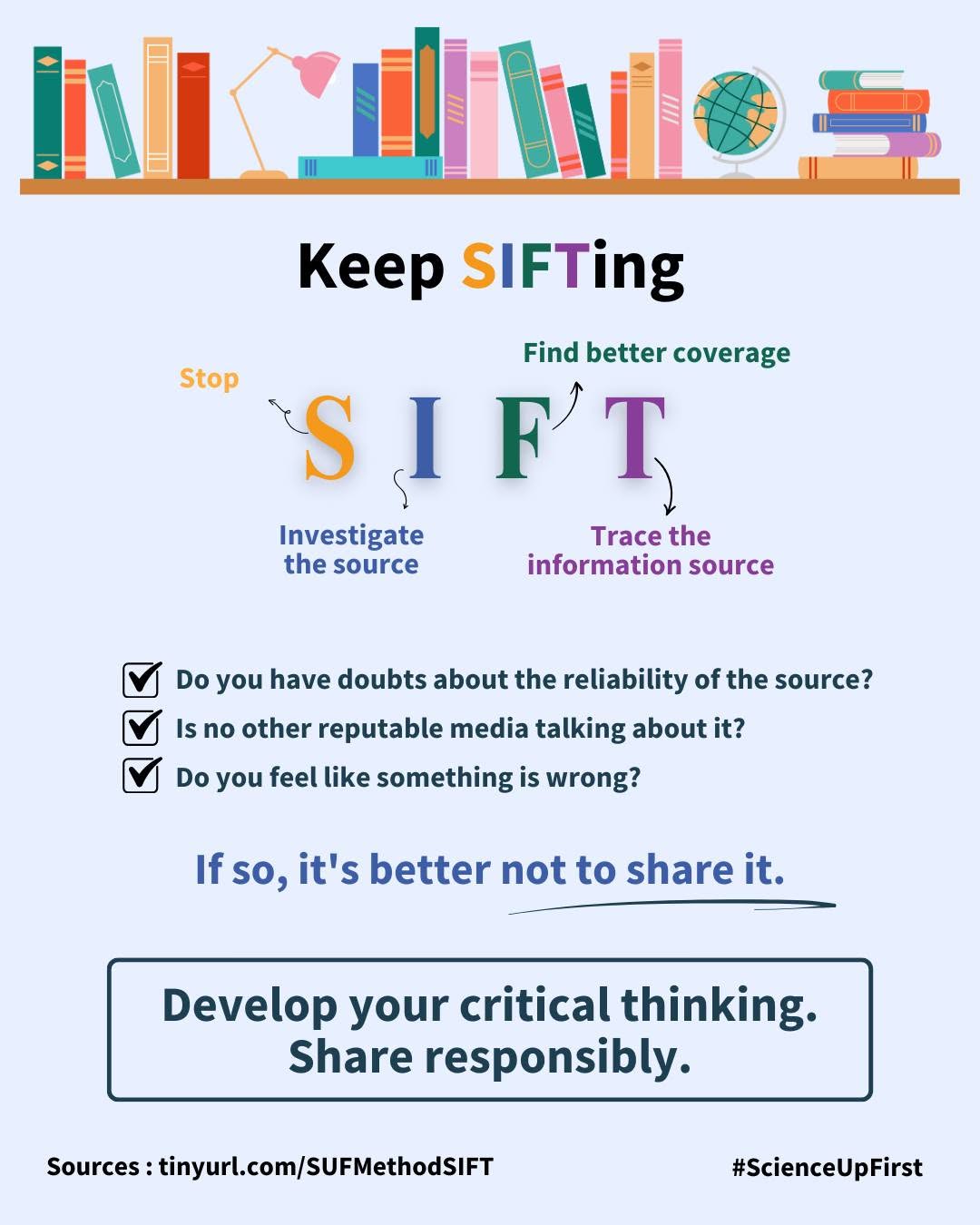

- Are you getting the full picture? | ScienceUpFirst | February 2022

- Comment from @niniandthebrain on ‘Florida reports 21 cases of E.coli infections linked to raw milk’ on Instagram | August 5, 2025

- Misinformer Tactic: Doubt Mongering | ScienceUpFirst | August 2022

- Beware the Virtuous MAHA Movement | American Council on Science and Health | July 2, 2025

- Be mindful of the Persecuted Hero Narrative | ScienceUpFirst | March 2024

Share our original Bluesky Post!

Wellness influencers often use misinformer tactics to persuade. We’ve already discussed 4 tactics in a previous post, but here are 4 more 👇 scienceupfirst.com/misinformati… #ScienceUpFirst

— ScienceUpFirst (@scienceupfirst.bsky.social) October 23, 2025 at 4:25 PM

View our original Instagram Post!